
ACL Submission in 10 Days from Scratch - with an AI Scientist I Built

Decoding the DNA of AI Research Innovation

I'd been thinking a lot about training LLMs to be more creative—not just to follow instructions or retrieve information, but to genuinely brainstorm the way human researchers do: connecting the dots across existing work and finding the next opportunity.

Innovation doesn't come from nowhere, so can we decode scientific innovation? Cognitive scientists have studied creative thinking patterns for decades. But what about AI research specifically? How do AI researchers build on prior work and transform it into something genuinely new?

If we could capture how researchers generate innovations—the recurring patterns behind how they build on prior work and transform it into new ideas—could we use those thinking trajectories to train the next generation of AI scientists to become much better at ideation?

I decided to start this project right before the new year break and submitted the paper by Jan 5th. The whole research workflow is open-sourced and fully transparent.

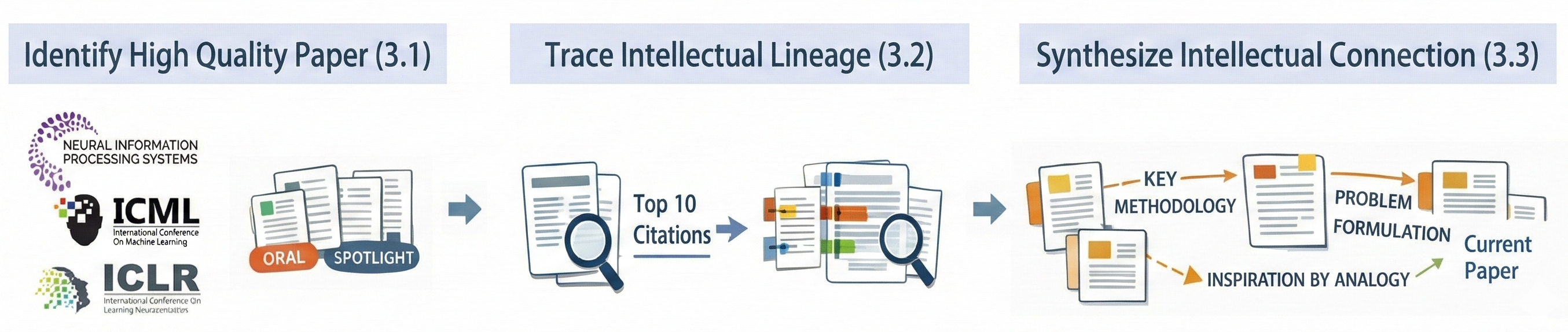

Decoding Innovation in Three Steps

Step 1: Where to find high-quality examples of research innovation?

I started with top-tier conference papers: specifically, Oral and Spotlight presentations at NeurIPS, ICML, and ICLR. These papers represent the top 1-5% of all submissions, selected by expert program committees as the most novel and impactful work. I asked my agent to systematically collect papers from 2023-2025, giving me 3,819 papers, a substantial corpus of the field's best recent work.

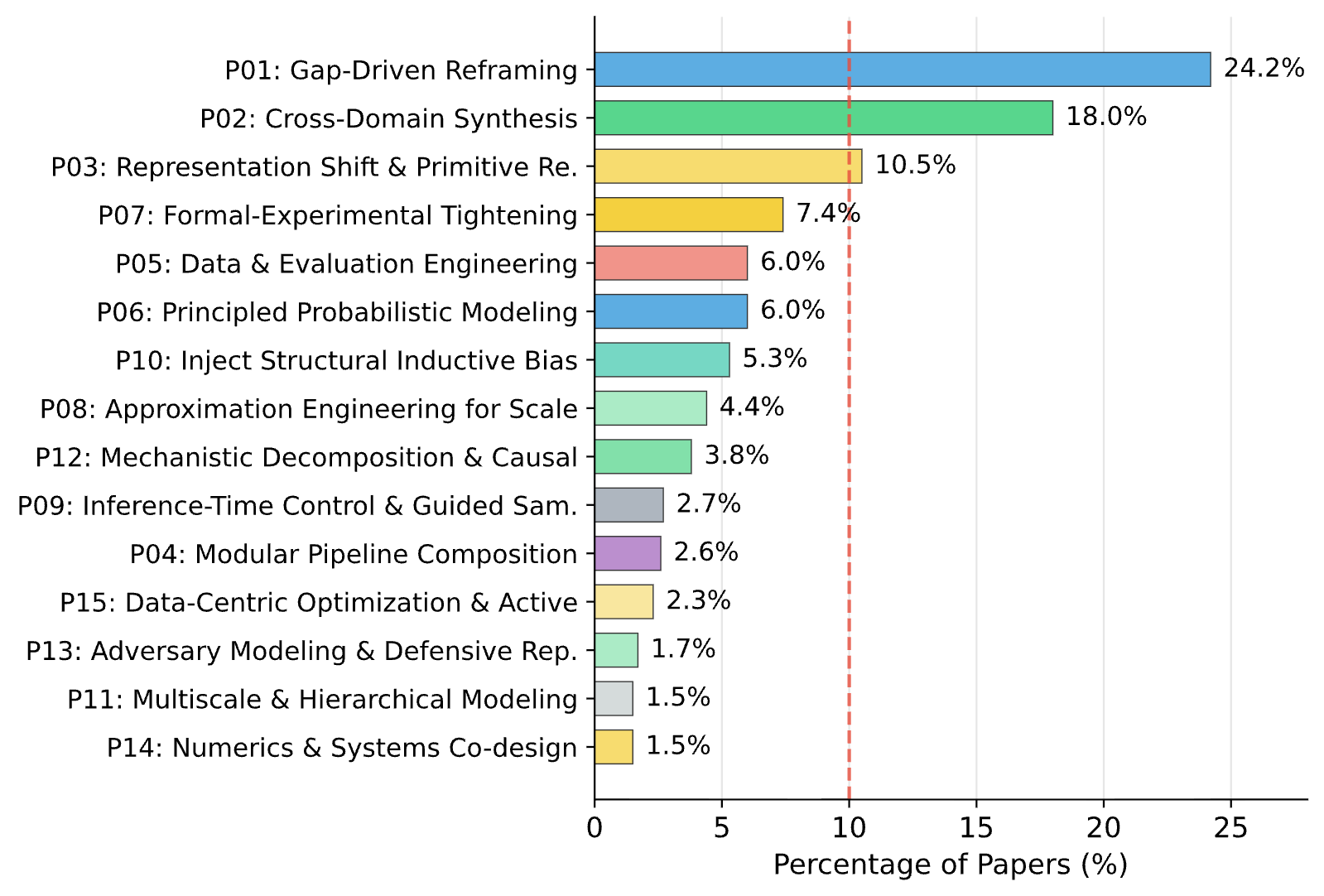

Step 2: How to extract the reasoning behind each innovation?

Every paper tells a story: here's what existed before, here's the gap we identified, here's how we built on prior work to create something new. That intellectual synthesis is usually implicit—woven into the introduction and related work sections. I asked my agent to make the reasoning trajectory explicit and structured. For each paper, I used an LLM to identify the key predecessors that formed its intellectual foundation—not just any citations, but the papers whose specific ideas, methods, or limitations directly shaped the contribution. Then it synthesized how the authors tell their story: how prior work inspires the current work, how different ideas connect, and what intellectual moves led to the innovation. For quality control, I validated the pipeline on papers where I had ground-truth knowledge of predecessors, and used multi-model cross-validation for low-confidence cases.
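
To make the cross-validation step concrete, here is a minimal sketch of one way several models' predecessor lists could be reconciled. It assumes a simple agreement threshold; the function name `consensus_predecessors`, the `min_agree` parameter, and the model and paper labels are all hypothetical, and the actual pipeline may work differently.

```python
from collections import Counter


def consensus_predecessors(
    votes_by_model: dict[str, set[str]], min_agree: int = 2
) -> set[str]:
    """Keep predecessor titles named by at least `min_agree` models."""
    counts = Counter(
        title for titles in votes_by_model.values() for title in titles
    )
    return {title for title, n in counts.items() if n >= min_agree}


# Hypothetical outputs from three models for one low-confidence paper.
votes = {
    "model_a": {"Paper X", "Paper Y"},
    "model_b": {"Paper X", "Paper Z"},
    "model_c": {"Paper X", "Paper Y"},
}

agreed = consensus_predecessors(votes)
print(sorted(agreed))  # ['Paper X', 'Paper Y']
```

Raising `min_agree` trades recall for precision: with `min_agree=3`, only predecessors that every model names survive.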

Step 3: Are there recurring patterns across thousands of papers?

If innovation is learnable, there should be patterns. Not every paper invents a completely new way of thinking. Researchers reuse cognitive strategies. With structured reasoning trajectories for nearly 4,000 papers, I asked the agent to run a systematic pattern discovery workflow to identify and quantify these patterns, asking: what cognitive strategies keep appearing?

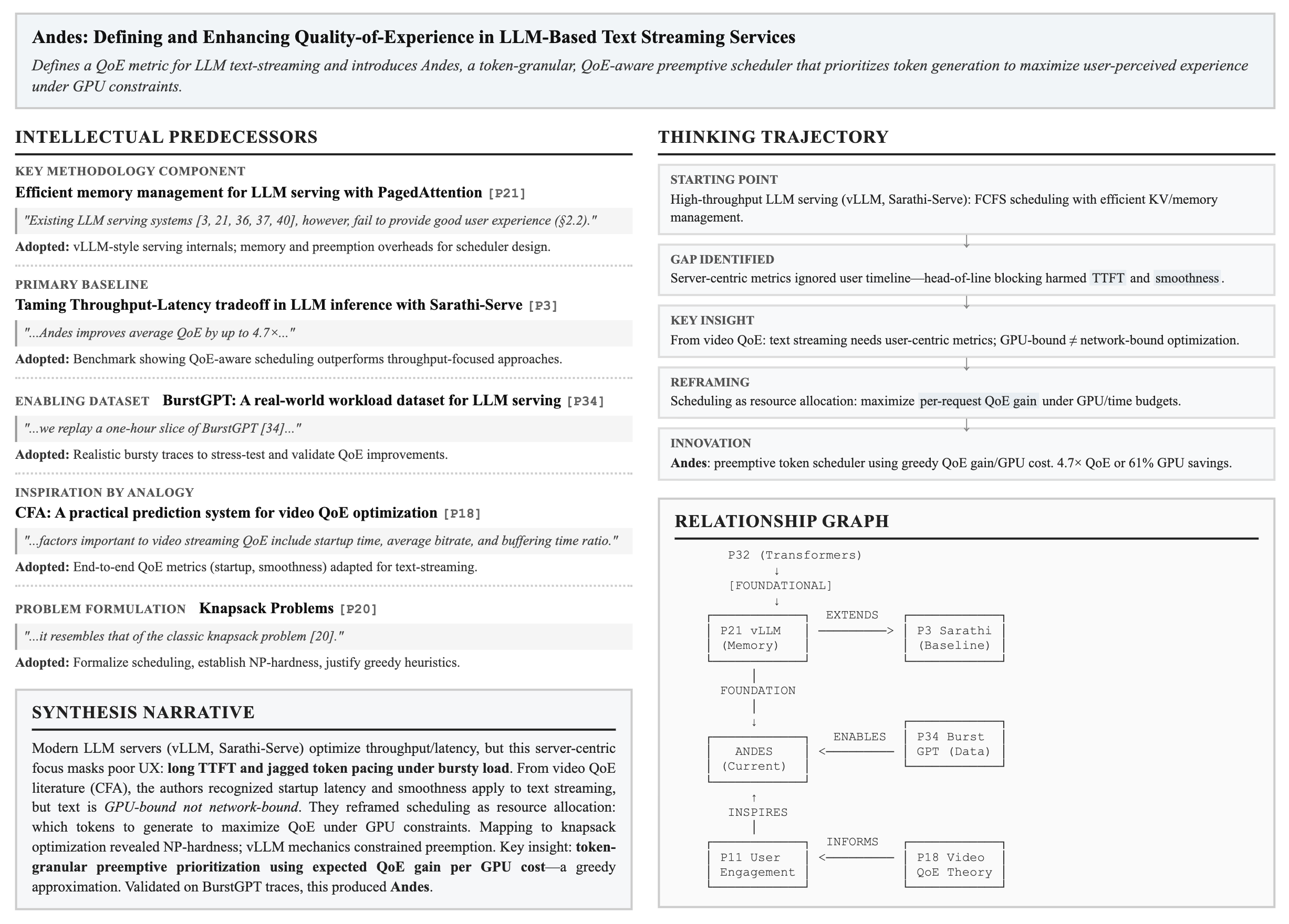

Discovery: 15 Thinking Patterns Behind Breakthrough Research

The results were more structured than I expected. Across the dataset, I identified 15 distinct thinking patterns—and three of them dominated:

Top Three Thinking Patterns

Researchers diagnose a specific limitation and reframe the problem to map onto better-suited methods. They don't just solve problems—they reshape them.

Breakthroughs often come from importing ideas from other fields and engineering compatibility layers. Borrowing and adapting, not inventing from scratch.

Changing the fundamental primitives of a problem—pixels to tokens, meshes to neural implicit functions—often simplifies everything downstream.

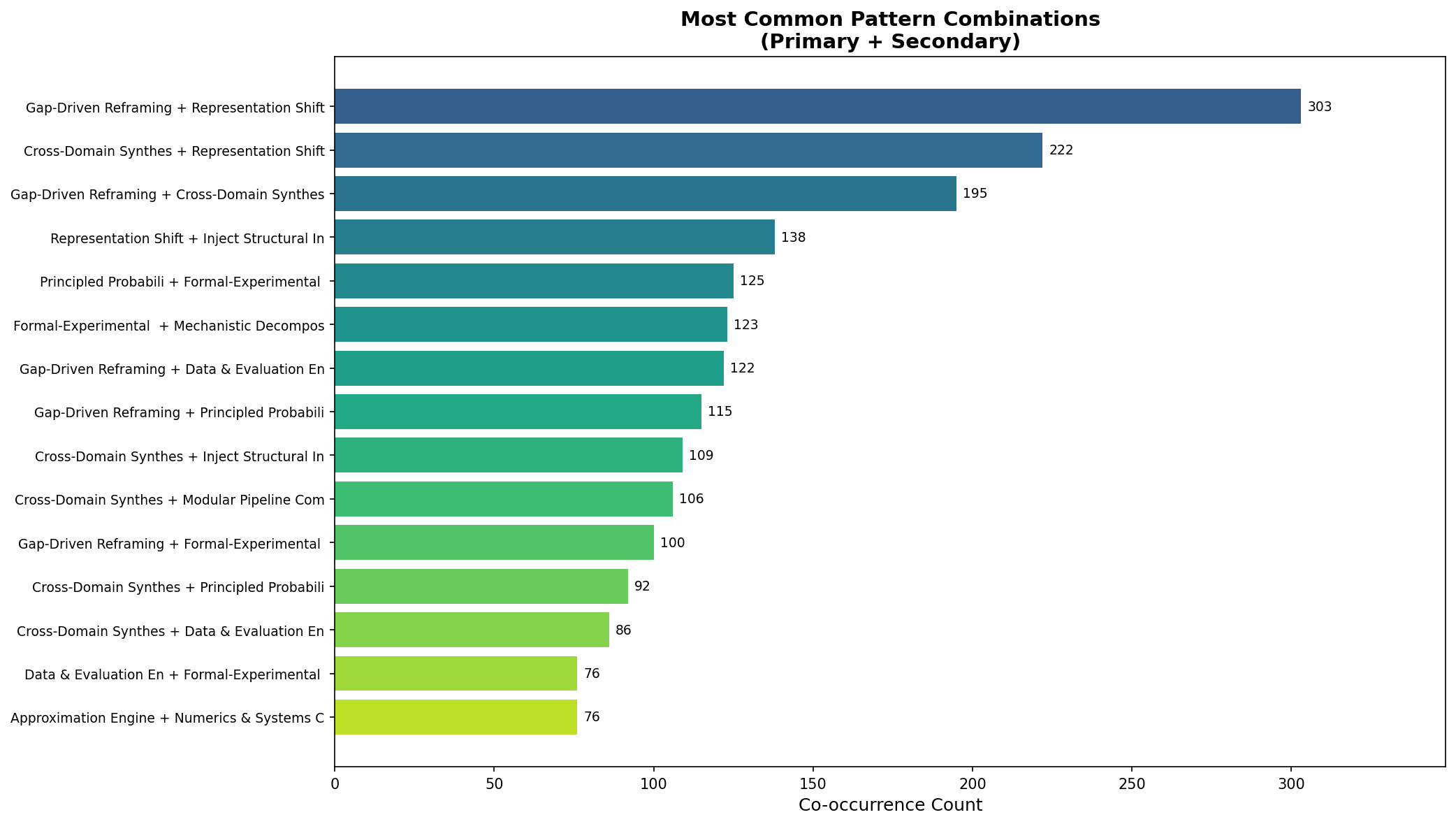

But the really interesting finding was about combinations. The most successful papers don't use just one pattern. They stack them:

Top Pattern Combinations

Diagnose a limitation, then introduce a new representation that sidesteps it.

Borrow a mechanism from another field and modify its representation for your domain.

Identify what's missing, then search for solutions in adjacent fields.
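
Once every paper carries a set of pattern labels, both the single-pattern ranking and the combination ranking reduce to counting. The sketch below shows that counting on toy data. The label names (`gap_reframing`, `cross_domain_transfer`, `representation_shift`) are placeholders I made up for illustration, not the paper's taxonomy, and the five toy papers are not real results.

```python
from collections import Counter
from itertools import combinations

# Toy data: each set holds the (hypothetical) pattern labels of one paper.
papers = [
    {"gap_reframing", "representation_shift"},
    {"cross_domain_transfer", "representation_shift"},
    {"gap_reframing", "cross_domain_transfer"},
    {"gap_reframing", "representation_shift"},
    {"gap_reframing"},
]

# How often each pattern appears across papers.
pattern_counts = Counter(label for labels in papers for label in labels)

# How often each unordered pair of patterns co-occurs in the same paper.
# Sorting the labels first makes (a, b) and (b, a) count as one pair.
pair_counts = Counter(
    pair for labels in papers for pair in combinations(sorted(labels), 2)
)

print(pattern_counts.most_common(1))  # [('gap_reframing', 4)]
print(pair_counts.most_common(1))     # [(('gap_reframing', 'representation_shift'), 2)]
```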

More analysis results can be found in the paper.

Sci-Reasoning: Decoding the DNA of AI Research Innovation

What started as a curiosity became Sci-Reasoning: the first dataset designed to capture the structured intellectual synthesis behind high-quality AI research.

The workflow that made this possible, from crawling papers to extracting lineage to discovering patterns, took just 10 days from initial exploration to paper submission. This was enabled by the next generation of Orchestra (to be released soon), which handles the entire research pipeline from ideation to experimentation to writing. The agent took over all the tedious work (data collection, processing, analysis) while intelligently managing the research project.

The dataset includes:

- 3,819 Oral and Spotlight papers from NeurIPS, ICML, and ICLR (2023-2025)

- Structured lineage graphs for each paper

- Synthesis narratives explaining intellectual moves

- Pattern classifications enabling quantitative analysis
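
To give a feel for how these four components might sit together in one record, here is a rough sketch. The class and field names are my own illustration and almost certainly differ from the released schema; the example values are placeholders, not entries from the dataset.

```python
from dataclasses import dataclass, field


@dataclass
class Predecessor:
    title: str
    role: str  # how this prior work shaped the paper (idea, method, limitation)


@dataclass
class PaperRecord:
    title: str
    venue: str         # e.g. "NeurIPS", "ICML", "ICLR"
    year: int
    presentation: str  # "Oral" or "Spotlight"
    predecessors: list[Predecessor] = field(default_factory=list)
    synthesis: str = ""  # narrative of the intellectual moves
    patterns: list[str] = field(default_factory=list)

    def lineage_edges(self) -> list[tuple[str, str]]:
        """Directed edges (predecessor -> this paper) for a lineage graph."""
        return [(p.title, self.title) for p in self.predecessors]


# Placeholder record, for illustration only.
record = PaperRecord(
    title="Example Paper",
    venue="ICLR",
    year=2024,
    presentation="Oral",
    predecessors=[
        Predecessor("Prior Work A", "limitation"),
        Predecessor("Prior Work B", "method"),
    ],
    synthesis="Diagnoses a limitation in A and adapts the method from B.",
    patterns=["hypothetical_pattern_label"],
)

print(record.lineage_edges())
# [('Prior Work A', 'Example Paper'), ('Prior Work B', 'Example Paper')]
```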

Training AI Scientists: From Patterns to Predictions

Here's what gets me excited: this dataset isn't just for understanding how research happens. It's training data for AI research agents.

I asked the agent to evaluate current LLMs' ability to predict research directions given only a paper's intellectual predecessors. Tested on NeurIPS 2025 Oral papers, Gemini 2.5 Pro achieved 49.35% Hit@10 accuracy: nearly half the time, at least one of its ten generated ideas matched the actual published contribution.

| Model | Hit@10 (%) |
|---|---|
| Gemini 2.5 Pro | 49.35 |
| Claude Opus 4 | 42.86 |
| GPT-5.2 | 38.89 |
| Claude Sonnet 4 | 29.87 |
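
For readers unfamiliar with the metric, Hit@10 counts a paper as a hit if at least one of the model's ten generated ideas matches the published contribution. A minimal sketch of the arithmetic follows, assuming the match judgments are already available as booleans. How a "match" is decided is not covered here, and the function name `hit_at_k` and the toy judgments are mine.

```python
def hit_at_k(matches_per_paper: list[list[bool]], k: int = 10) -> float:
    """Percentage of papers where at least one of the first k ideas matched."""
    hits = sum(any(matches[:k]) for matches in matches_per_paper)
    return 100.0 * hits / len(matches_per_paper)


# Toy judgments for four papers, ten generated ideas each (not real results).
matches = [
    [False] * 9 + [True],   # hit on the tenth idea
    [False] * 10,           # miss
    [True] + [False] * 9,   # hit on the first idea
    [False] * 10,           # miss
]

print(hit_at_k(matches))       # 50.0
print(hit_at_k(matches, k=5))  # 25.0
```

Because a single match among ten ideas is enough, Hit@10 rewards breadth of ideation rather than the quality of the top-ranked idea.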

That's not perfect, but it's remarkable. It suggests that research ideation follows learnable patterns. Patterns we can now study systematically. Patterns we can potentially teach.

Innovation as Learnable Patterns

Innovation isn't magic. It's pattern-matching at a high level of abstraction, combined with deep domain knowledge and relentless iteration. By making scientific reasoning explicit—by transforming the implicit "how did they think of that?" into structured, queryable data—we're taking a step toward AI systems that don't just assist research, but actively participate in discovery.

Of course, there are limitations. Innovation is usually only visible as the final outcome: a polished paper with a clean narrative. The full thinking trajectory—the failed attempts, the back-and-forth exploration, the abandoned directions—is almost never captured. It's locked inside researchers' heads and scattered across months of Slack messages, whiteboard photos, and deleted LaTeX files. Capturing everything is nearly impossible. But we can capture the final successful reasoning path—the trajectory that led to a high-quality result—and that's exactly what Sci-Reasoning provides.